Your AI Skills Just Hit Their Expiration Date

The skills you just mastered are already obsolete. Learn to surf the ever-expanding frontier where humans and AI collide, or get left behind.

Remember when learning PowerPoint was a career skill? When "computer literacy" meant knowing Windows 95? Those skills had finish lines. You could master them, put them on your resume, and coast for years. The AI revolution just broke that pattern forever.

Every workforce skill in human history had a destination: literacy, numeracy, even coding. You learned it, mastered it, and moved on. But AI capabilities expand like a bubble, and here's the kicker: as the bubble grows, its surface area, the zone where humans and AI collaborate, grows with it. More AI capability means more human opportunity, not less. The catch? Your skills now expire on a quarterly cycle with each model release.

Welcome to "Frontier Operations," the meta-skill that's replacing everything you thought you knew about working with AI.

The Death of Set-and-Forget Skills

Traditional AI training feels like teaching someone to drive on a road that rebuilds itself every three months. You perfect your prompt engineering techniques, then GPT-5 drops and half your carefully crafted prompts become irrelevant. You master one model's quirks, then Claude's new reasoning capabilities shift the entire landscape.

The bubble metaphor captures this perfectly. Inside the bubble sits work AI handles reliably. Outside remains purely human territory. But the action happens on the surface, that expanding frontier where human judgment determines what gets handed off and what stays human. As models improve, this boundary doesn't just move; it transforms entirely.

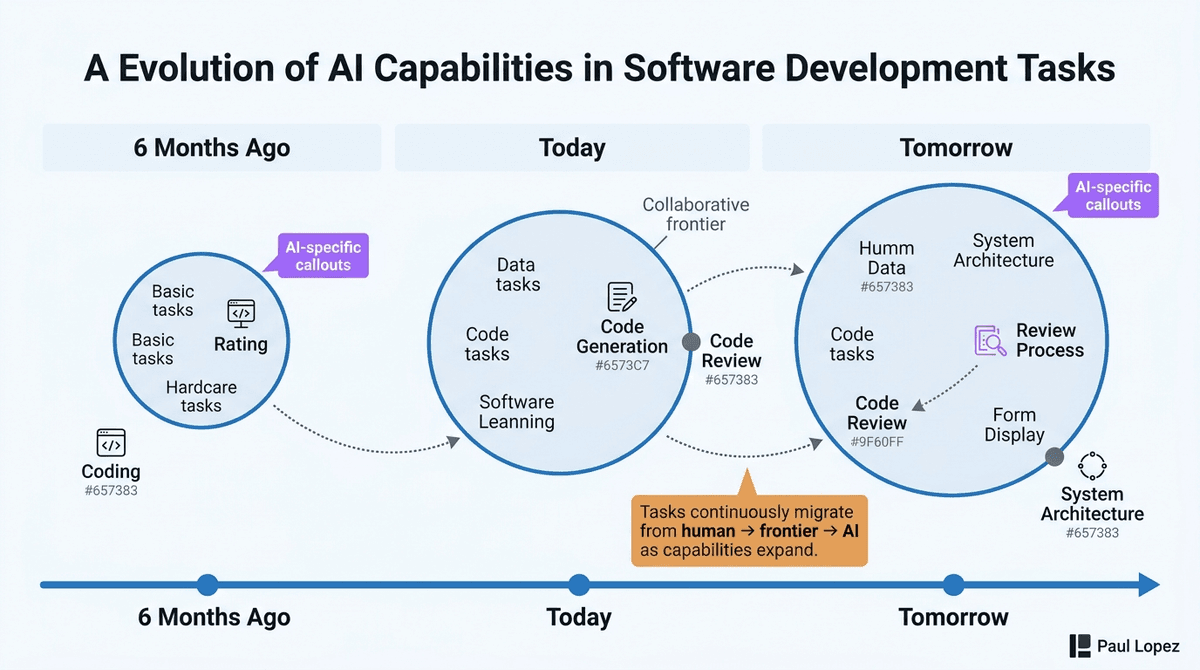

Consider software development. Six months ago, coding was clearly human work. Today, code generation sits inside the bubble while code review occupies the frontier. Tomorrow? Code review might be automated while system architecture becomes the new human territory. The skill isn't learning to prompt better; it's learning to surf these shifting boundaries.

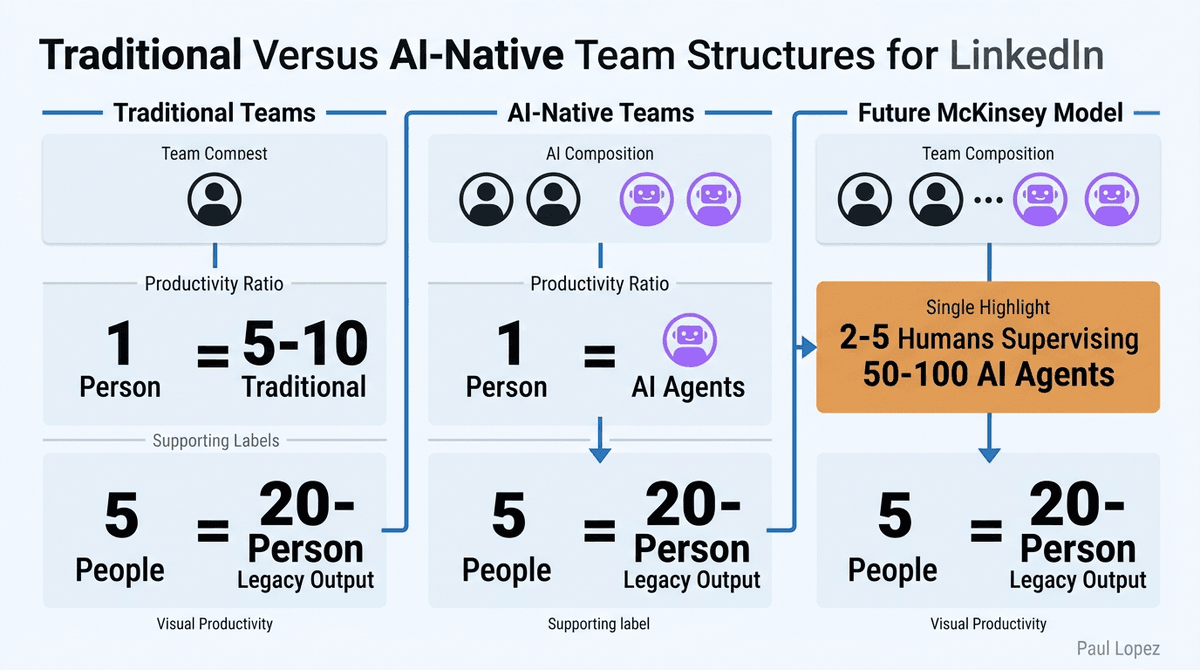

This creates what researchers call "structural skill obsolescence." Unlike previous technological shifts where you could retrain once and adapt, frontier work requires continuous recalibration. McKinsey's latest framework suggests teams will soon structure around 2-5 humans supervising 50-100 AI agents. The question isn't whether you'll work with AI, but whether you can maintain accurate intuition about where that collaboration boundary sits.

Frontier Operations: Five Components of the New Meta-Skill

Boundary Sensing forms the foundation. This means maintaining real-time intuition about what AI can handle versus what needs human oversight. A product manager might let agents draft competitive analysis while reserving stakeholder dynamics for human judgment. The key? Updating this calibration constantly as models improve, not setting it once and forgetting.

Seam Design involves structuring clean transitions between human and agent work phases. A software engineering lead assigns ticket triage to agents but keeps architectural decisions human. As capabilities shift, the workflow itself needs redesign. The best frontier operators think like choreographers, orchestrating smooth handoffs between human insight and machine execution.

Failure Model Maintenance requires understanding the specific texture of how current models fail. Modern AI failures are subtle: 98% accurate output with 2% confidently fabricated details. A corporate counsel might trust agents for boilerplate contract language while manually reviewing every cross-reference between liability provisions. It's like knowing that your GPS is perfect on highways but hopeless in parking garages.

Capability Forecasting means making 6-12 month predictions about boundary movement. Think of it like reading ocean swells, positioning for the next wave without trying to predict exact outcomes. Smart developers see coding moving inside the AI bubble and position themselves for the emerging frontier of code review and system design.

Leverage Calibration optimizes human attention allocation in agent-rich environments. An engineering manager creates hierarchical attention: automated tests handle routine code, human review focuses on billing and data pipelines. It's the difference between being a quality inspector and being a quality systems architect.
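The hierarchical attention described under Leverage Calibration can be pictured as a simple routing rule. What follows is only a minimal sketch under stated assumptions, not a prescribed implementation: the `Change` type, the `route_review` function, and the path prefixes are hypothetical names invented for illustration, and escalating failed tests to a human is an added assumption rather than something the framework specifies.

```python
from dataclasses import dataclass

# Hypothetical high-risk areas. The article names billing and data pipelines;
# the exact path prefixes here are invented for illustration.
HIGH_RISK_PREFIXES = ("billing/", "pipelines/")


@dataclass(frozen=True)
class Change:
    path: str
    tests_passed: bool


def route_review(change: Change) -> str:
    """Return 'human' or 'automated' for a proposed code change."""
    # High-risk areas always get human attention, even when tests pass.
    if change.path.startswith(HIGH_RISK_PREFIXES):
        return "human"
    # Assumption: routine code that fails its automated tests is escalated.
    if not change.tests_passed:
        return "human"
    # Everything else is left to the automated checks.
    return "automated"


changes = [
    Change("billing/invoice.py", tests_passed=True),
    Change("ui/button.py", tests_passed=True),
    Change("ui/form.py", tests_passed=False),
]
decisions = {c.path: route_review(c) for c in changes}
```

The point of the sketch is that the rule itself is the artifact worth maintaining: as capabilities shift, the list of high-risk areas is what a frontier operator would revisit, rather than each individual review.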

Why Timing Creates Exponential Advantages

Here's where it gets interesting. Someone who started developing frontier operations six months ago doesn't just have a head start. They have six months of updated calibration that creates compounding advantages. Their boundary sense is more accurate. Their failure models are more nuanced. Their attention allocation is more sophisticated.

Look at AI-native companies like Cursor or Lovable. They're shipping features at velocities that traditional teams can't match, not because they have better AI models (everyone rents the same compute), but because their teams have developed superior frontier operations. They've learned to structure work around the expanding AI capability bubble rather than fighting it.

The capability gap compounds daily. Traditional training focuses on knowledge transfer, but frontier operations requires experiential calibration. You can't learn boundary sensing from a course; you develop it by tracking where your predictions about AI capability prove wrong. The best frontier operators log "agent surprises" as learning signals. If AI hasn't surprised you recently, you're not operating at the boundary.
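One way to make the habit of logging "agent surprises" concrete is to record a prediction before each handoff and compare it with what happened. The sketch below is a hypothetical illustration: the `Prediction` and `SurpriseLog` classes, their fields, and the sample tasks are all assumptions introduced here, not part of any established tool or of the framework itself.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Prediction:
    task: str
    expected_success: bool  # what you predicted the agent would manage
    actual_success: bool    # what actually happened

    @property
    def surprise(self) -> bool:
        # A surprise is any mismatch, in either direction.
        return self.expected_success != self.actual_success


@dataclass
class SurpriseLog:
    entries: list = field(default_factory=list)

    def record(self, task: str, expected_success: bool, actual_success: bool) -> Prediction:
        entry = Prediction(task, expected_success, actual_success)
        self.entries.append(entry)
        return entry

    def surprises(self) -> list:
        return [e for e in self.entries if e.surprise]

    def hit_rate(self) -> Optional[float]:
        """Fraction of predictions that matched reality, or None if the log is empty."""
        if not self.entries:
            return None
        return 1 - len(self.surprises()) / len(self.entries)


log = SurpriseLog()
log.record("draft competitive analysis", expected_success=True, actual_success=True)
log.record("summarize stakeholder dynamics", expected_success=False, actual_success=True)
log.record("cross-reference liability provisions", expected_success=True, actual_success=False)
log.record("triage tickets", expected_success=True, actual_success=True)

surprising_tasks = [e.task for e in log.surprises()]
```

In this sample, two of the four predictions were wrong, one in each direction. A log with no surprises at all would suggest, in the article's terms, that the work is not happening at the boundary.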

This creates a new form of professional development that looks more like athletic training than academic learning. Maximum feedback density matters more than training hours. Practice environments beat courseware. And like physical fitness, frontier operations requires ongoing maintenance, not one-time achievement.

The New Team Operating System

Organizations are discovering that frontier operations enables entirely new team structures. In the "Team of One" model, a single high-leverage frontier operator produces output equivalent to that of a traditional 5-10 person team. The "Team of Five" combines one deep frontier operator with specialists, shipping at the pace of 20-person legacy structures.

Hiring criteria are shifting dramatically. Traditional signals like credentials and years of experience become less predictive. The key questions: Can they articulate where agents succeed versus fail? Do they have differentiated failure models? Can they redesign workflows when capabilities shift quarterly?

Smart healthcare organizations are already applying this thinking. Clinical teams use AI for initial symptom analysis while reserving patient interaction design for humans. Administrative workflows hand routine insurance processing to agents while keeping complex denial appeals human. The frontier operators excel at designing these human-AI collaboration patterns and updating them as capabilities evolve.

The Economic Stakes

This isn't just about individual career development. Frontier operations will determine which businesses succeed over the next decade and which economies win globally. Success won't come from building better AI models (increasingly commoditized) or having more compute (rentable), but from the human capacity to convert those inputs into economic output.

The companies mastering frontier operations now will have insurmountable advantages as AI capabilities continue expanding. They're not just using AI tools; they're developing organizational competencies for continuous adaptation to AI advancement.

For individuals, the message is clear: start building frontier operations skills today. Track where your boundary intuition proves incorrect. Log agent surprises. Experiment with workflow redesigns. If you wait six months, you won't just be behind; you'll be operating with fundamentally less accurate calibration than people who started now.

The AI revolution isn't coming. It arrives every quarter, for the foreseeable future. The question isn't whether you'll adapt, but whether you'll develop the meta-skill for continuous adaptation itself.

References

- YouTube Video Analysis: "Why Every AI Skill You Learned 6 Months Ago Is Already Wrong (And What Is Replacing Them)" - Comprehensive framework analysis and implementation strategies

- McKinsey Research Framework: Human-to-agent supervision ratios in emerging AI-integrated team structures