Who Really Owns Your AI's Accumulated Business Knowledge

When your AI learns your company's secrets, who gets to keep them? The $10 billion ownership blind spot hiding in every enterprise contract.

Who Owns What Your AI Learned?

The $10 Billion Question Nobody in Enterprise AI Is Asking

Published on paullopez.ai | Paul Lopez | Enterprise AI

There is a scene in Goodfellas where Henry Hill reflects, "At first, you're this average nobody getting to live like a millionaire. But after a while, it becomes routine." He could have been talking about your AI platform.

Your enterprise deployed an AI agent eighteen months ago. Maybe it handles revenue cycle management. Maybe it triages compliance reviews. Maybe it runs regulatory monitoring across six jurisdictions. Whatever it does, here is what happened in those eighteen months that nobody planned for: the agent learned how your company works.

Not in the abstract. It learned your company. Your escalation patterns. Your exception handling logic. The specific phrasing your regulatory team uses when a filing is ambiguous. The particular supplier who always ships late in Q3. The two physicians whose dictation style requires a completely different documentation approach than everyone else on the medical staff.

That accumulated knowledge is an asset. A significant one. And right now, nobody in your organization can answer a basic question about it: who owns it?

The Question Your Vendor Hopes You Never Ask

Pull up your AI platform contract. Search for "accumulated knowledge." Search for "learned patterns." Search for "behavioral context." Search for "institutional intelligence."
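If the contract runs to dozens of pages, the search can be scripted. What follows is a minimal sketch, assuming the contract has already been exported to plain text; the `find_terms` helper and the sample clause are hypothetical illustrations, and the term list is taken from the phrases above plus "usage data."

```python
import re

# Phrases to look for; extend with whatever wording your vendor favors.
TERMS = [
    "accumulated knowledge",
    "learned patterns",
    "behavioral context",
    "institutional intelligence",
    "usage data",
]


def find_terms(contract_text: str, terms=TERMS) -> dict[str, list[int]]:
    """Map each term to the 1-based line numbers where it appears (case-insensitive)."""
    hits = {term: [] for term in terms}
    for line_no, line in enumerate(contract_text.splitlines(), start=1):
        for term in terms:
            # Tolerate any run of whitespace between the words of a term.
            pattern = r"\s+".join(re.escape(word) for word in term.split())
            if re.search(pattern, line, flags=re.IGNORECASE):
                hits[term].append(line_no)
    return hits


# Hypothetical contract excerpt, for illustration only.
sample = """1. Definitions
"Usage Data" means logs generated by Customer's use of the Service.
2. Ownership
Vendor retains all rights in the Service."""

result = find_terms(sample)
for term, lines in result.items():
    print(f"{term!r}: {lines if lines else 'not found'}")
```

A keyword search only shows whether the language is present. It does not tell you what a clause means, so any hit still needs a lawyer's reading.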

You will find nothing. Or you will find a vaguely worded clause about "usage data" that was drafted for a world where AI tools responded to queries and forgot them immediately. Neither result addresses what is actually happening in your environment today.

What is actually happening is this: your persistent AI agent is developing what I call agentic cognition. It is the body of institutional intelligence that reflects your specific operational context, built up through months of persistent operation. This cognition exists only because this agent has worked inside your environment. It would not exist in a fresh deployment elsewhere. It cannot be easily replicated. And it lives entirely inside a platform you do not control.

Think about that for a moment. Your best people have spent a year and a half teaching an AI how your company works. The AI learned. The knowledge lives on someone else's servers, in someone else's proprietary format, governed by someone else's terms of service.

If that does not make your Chief Legal Officer uncomfortable, they have not been briefed properly.

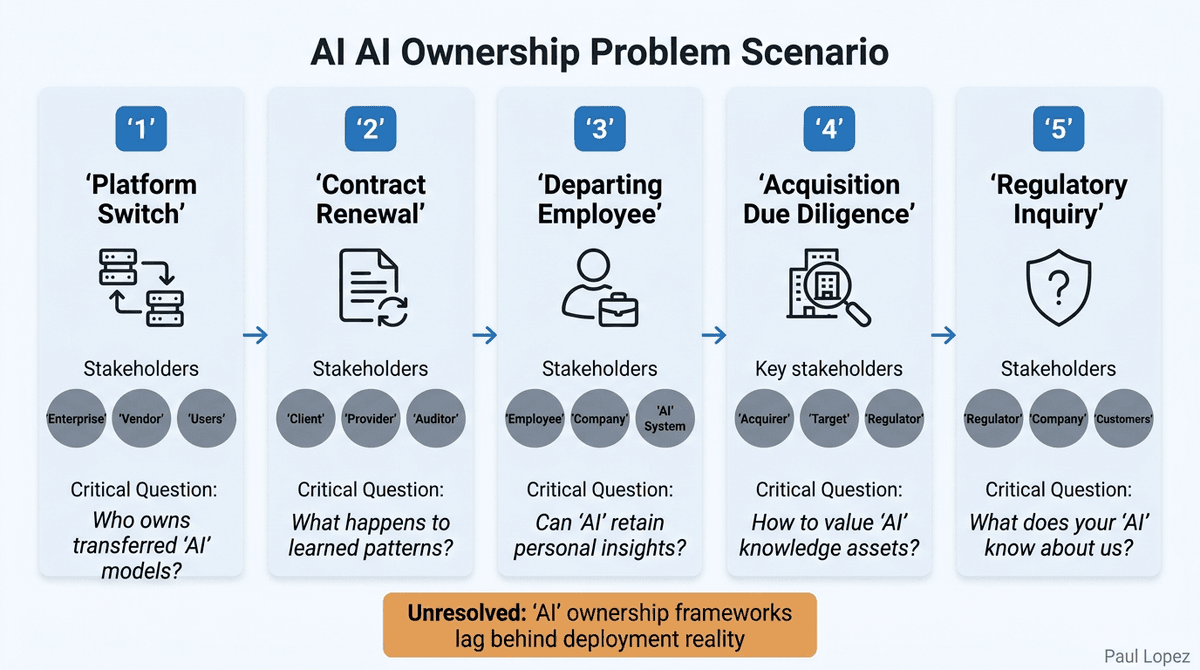

Five Ownership Scenarios, Zero Clear Answers

Let me walk through five scenarios that are playing out in enterprises right now. Each one involves agentic cognition, and in each case, the ownership question is genuinely unresolved.

Scenario 1: The platform switch. Your company decides to move from AI Platform A to Platform B. You can export your data. You can rewrite your prompts. You can reconfigure your integrations. But the eighteen months of accumulated behavioral calibration, the learned patterns, the agentic cognition the agent developed through operation? That does not export. There is no CSV of organizational intelligence. What happens to it?

Scenario 2: The contract renewal. Your platform vendor doubles its price. You want to negotiate from a position of strength. But astronomical switching costs undermine that position, not because of the technology, but because of what the technology has learned about you. The vendor knows this. You might not.

Scenario 3: The departing employee. Your best revenue cycle analyst worked alongside the AI agent for two years. Her judgment, her exception handling instincts, her domain expertise shaped how the agent operates today. She leaves for a competitor. She takes her expertise with her (that is legal). But the AI retains a behavioral model that reflects her specific patterns. Who owns that model? Her? Your company? The platform vendor?

Scenario 4: The acquisition due diligence. A private equity firm is evaluating your company. They want to value your AI capabilities. Part of that value is the agentic cognition your agents have developed. But nobody can quantify what that cognition is worth, where it lives, or whether it transfers with the acquisition. The due diligence team has no framework for this.

Scenario 5: The regulatory inquiry. A regulator asks what your AI "knows" about your customers, your patients, or your operations. You can explain what data it accesses. You can explain what instructions it follows. But you cannot explain what it has learned through eighteen months of pattern accumulation, because nobody has inventoried it. And "we do not know what our AI knows" is not an answer any regulator wants to hear.

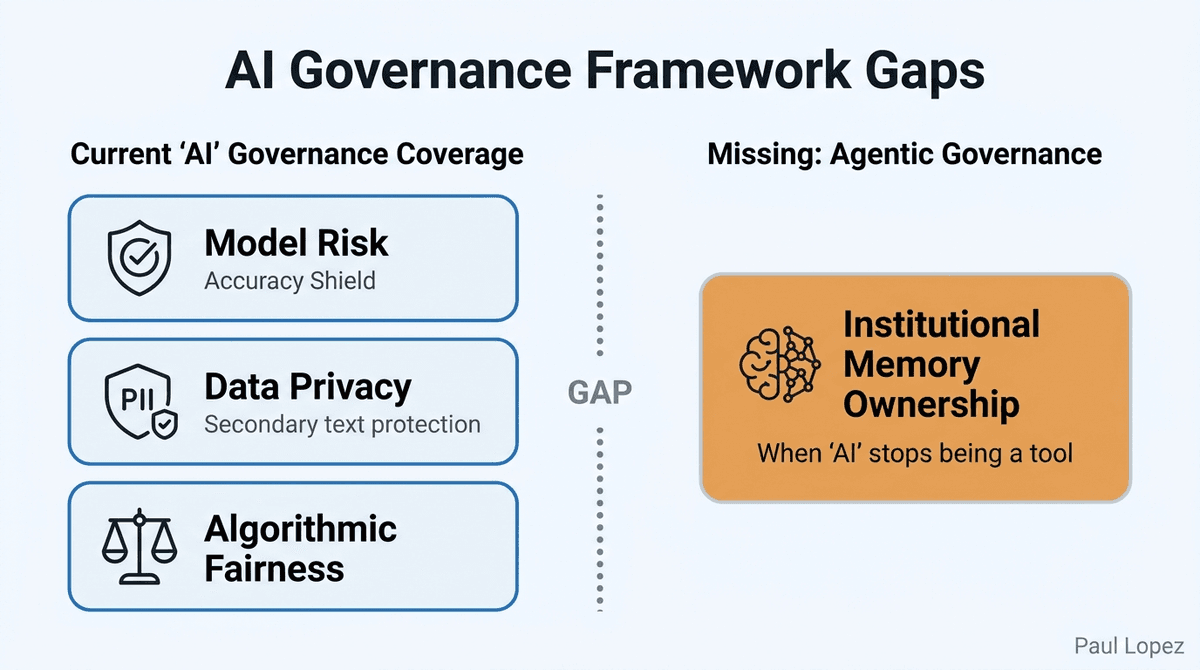

Why Existing Governance Frameworks Miss This

Every enterprise AI governance framework I have evaluated in the last two years addresses three things: model risk (is the AI accurate?), data privacy (is PII protected?), and algorithmic fairness (is the AI biased?). Those are important. They are also incomplete.

None of them address what happens when AI stops being a tool and starts being an institutional memory. None of them ask who owns the agentic cognition an agent accumulates over time. None of them provide a methodology for measuring what that accumulated intelligence is worth, or what you would lose if you had to start over on a different platform.

McKinsey recognized the edges of this problem in their September 2025 report on the agentic organization, noting that enterprises would need to protect proprietary organizational context, institutional knowledge, and nonpublic data for competitiveness. They saw the problem. They did not name it. They did not propose a governance framework for it.

This gap is not a technicality. It is a structural risk. And it is compounding every month because the agents are still running, still learning, and still accumulating agentic cognition that nobody is governing.

The governance frameworks were built for a world where AI was stateless. The world has moved to persistent, context-accumulating agents. The frameworks have not caught up.

What This Means for Your Next Platform Decision

I am not going to propose a solution in this article. That is a longer conversation, and it involves methodology that is still being developed. But I will leave you with three questions that every enterprise deploying persistent AI agents should be asking right now.

First: Can you produce, today, an inventory of everything your AI agents have learned about your organization? Not what data they access. Not what instructions they follow. What they have learned through persistent operation. If you cannot, you are governing assets you have not inventoried.

Second: Does your AI platform contract address the ownership of agentic cognition? Not "usage data." Not "logs." The actual learned behavioral patterns and institutional knowledge the agent has developed. If the contract is silent, you have an ownership ambiguity that will become expensive to resolve at the worst possible time.

Third: If you had to switch AI platforms tomorrow, could you quantify what you would lose? Not the migration cost for data and integrations. The cost of the agentic cognition that would be left behind. The institutional intelligence that took months or years to develop and cannot be exported in any format.

If you can answer all three, you are ahead of most of the market. If you cannot, you are in the same position as everyone else: running AI agents that are accumulating agentic cognition nobody is governing, on platforms you do not fully control, under contracts that do not address the most valuable asset being created.
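The three questions above can be tracked as a plain yes/no self-assessment. Here is a minimal sketch, assuming a hypothetical `GovernanceCheck` record; the field names and gap messages are illustrative and do not come from any established framework. It records answers only and makes no attempt to value the cognition itself.

```python
from dataclasses import dataclass


@dataclass
class GovernanceCheck:
    """Yes/no answers to the three questions (hypothetical field names)."""
    has_learning_inventory: bool      # Question 1: inventory of what agents learned
    contract_covers_cognition: bool   # Question 2: contract addresses ownership
    switching_loss_quantified: bool   # Question 3: loss on platform switch is known

    def gaps(self) -> list[str]:
        """Return a description of every question answered 'no'."""
        messages = {
            "has_learning_inventory": "No inventory of what the agents have learned",
            "contract_covers_cognition": "Contract is silent on ownership of agentic cognition",
            "switching_loss_quantified": "Loss from a platform switch has not been quantified",
        }
        return [msg for field, msg in messages.items() if not getattr(self, field)]


# Example: only the third question can be answered today.
check = GovernanceCheck(
    has_learning_inventory=False,
    contract_covers_cognition=False,
    switching_loss_quantified=True,
)
open_gaps = check.gaps()
for gap in open_gaps:
    print("-", gap)
```

An empty list from `gaps()` means all three questions have an answer. It says nothing about whether those answers are good ones.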

At first, you're just another company using AI tools. But after a while, the dependency becomes routine. And by the time you realize how much you depend on what the AI learned, the cost of leaving is a number nobody can calculate.

That is the question. Who owns what your AI learned?