When AI Writes Code Faster Than Systems Can Handle

Modern AI thinks in milliseconds but waits in meetings, creating a "speed tax" that turns digital Ferraris into digital donkeys.

When AI Writes Its Own Code… And You're Just There for the Vibe

Last week, I heard a story about an LLM that analyzed a 200-page legal document in 3 minutes. Then people had to wait 47 minutes for their legacy contract management system to process the results. We've built the fastest reasoning engines in history, then chained them to infrastructure designed for human coffee breaks.

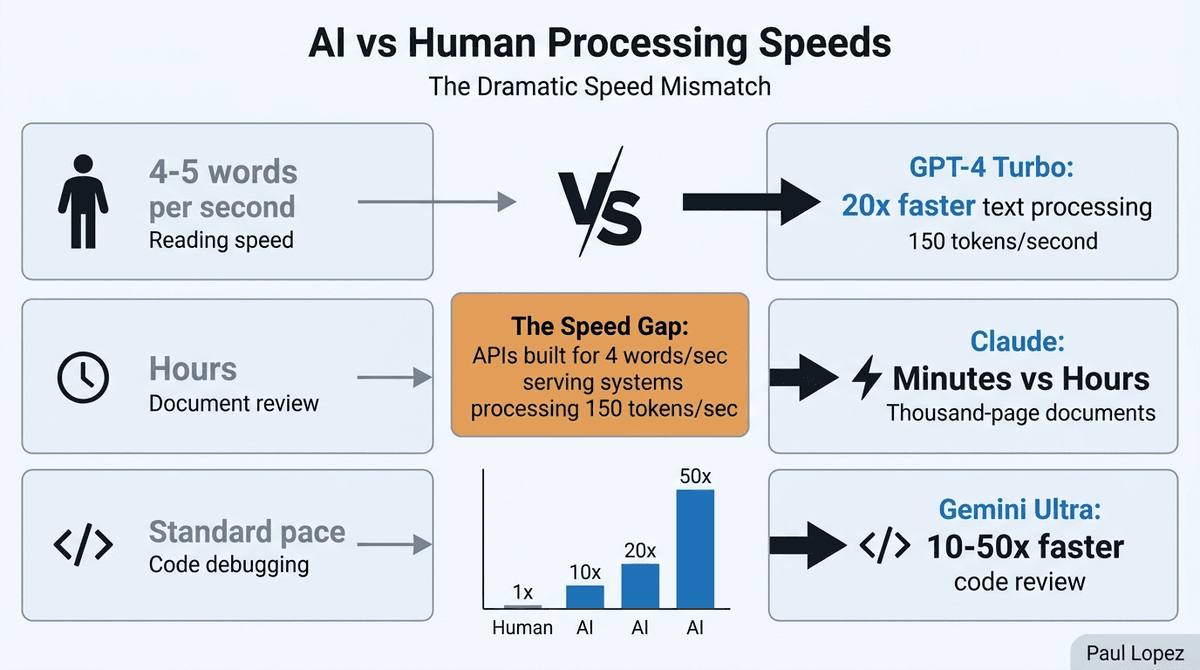

This isn't just a productivity problem. It's an architectural crisis hiding in plain sight. We're asking AI systems that process information at 150 tokens per second to play nicely with APIs designed for humans who read at 4-5 words per second [1]. That's like asking a Ferrari to follow a horse-drawn carriage through downtown traffic.

The companies that recognize this mismatch first will rebuild their infrastructure around AI-native workflows. Everyone else will keep wondering why their expensive AI initiatives feel like expensive consultants: brilliant insights delivered at the speed of bureaucracy.

The Speed Tax Is Real

The acceleration gap between AI capabilities and human-centric infrastructure creates what I call the "speed tax": artificial delays that cost real money. OpenAI's GPT-4 Turbo can process text roughly 20 times faster than human comprehension, while Anthropic's Claude tears through thousand-page documents in minutes versus hours for human experts [1,2]. Google's Gemini Ultra demonstrates 10-50x speed advantages in code review and debugging tasks [3].
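To put a number on the speed tax, here is a minimal sketch that measures it as the share of wall-clock time spent waiting on surrounding systems rather than on the AI itself. The function name and this particular definition are my own assumptions for illustration; the 3-minute and 47-minute figures come from the legal-document story above.

```python
def speed_tax_share(ai_seconds: float, wait_seconds: float) -> float:
    """Fraction of end-to-end time spent waiting on surrounding systems.

    Hypothetical definition used for illustration only.
    """
    total = ai_seconds + wait_seconds
    if total <= 0:
        raise ValueError("total time must be positive")
    return wait_seconds / total


# The legal-document anecdote: 3 minutes of analysis, 47 minutes of waiting.
share = speed_tax_share(ai_seconds=3 * 60, wait_seconds=47 * 60)
print(f"{share:.0%} of the wall-clock time is speed tax")  # 94%
```

Under that definition, the AI accounts for only 6% of the time the user actually experiences.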

But here's where it gets interesting: Berkeley's RISE Lab found that 60-80% of ML pipeline latency comes from data loading and API calls, not model inference [5]. Microsoft's GitHub Copilot study reveals that file I/O operations account for 35% of total response time in coding assistants [6]. We've solved the hard problem (making AI smart) and created a new hard problem (making everything else keep up).
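Those percentages put a hard ceiling on what a faster model can buy you. The sketch below applies Amdahl's law to the 60-80% figure, assuming (my simplification) that everything outside data loading and API calls is model inference and that the two parts do not overlap. The function and parameter names are illustrative.

```python
def max_speedup(non_inference_share: float,
                inference_speedup: float = float("inf")) -> float:
    """Amdahl's law: overall speedup when only the inference part gets faster.

    non_inference_share: fraction of latency from data loading and API calls.
    inference_speedup: how many times faster inference becomes (inf = free).
    """
    if not 0.0 < non_inference_share <= 1.0:
        raise ValueError("non_inference_share must be in (0, 1]")
    inference_share = 1.0 - non_inference_share
    return 1.0 / (non_inference_share + inference_share / inference_speedup)


# Even with infinitely fast inference, the pipeline barely improves.
for share in (0.6, 0.8):
    print(f"{share:.0%} non-inference latency -> at most {max_speedup(share):.2f}x faster")
```

With 80% of latency outside the model, a perfect model yields at most a 1.25x end-to-end improvement; at 60%, about 1.67x.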

Consider healthcare specifically. An AI system can analyze medical imaging in seconds, but our HIPAA-compliant systems still require human approval workflows designed for paper charts. The AI waits while humans click through approval screens that made sense in 2003 but feel like digital archaeology today.

Stripe's API documentation shows rate limits of 100 requests per second for standard accounts [4]. Perfect for human-driven applications. Completely inadequate for agent workflows that might need to process thousands of transactions in batch operations. It's like designing highways for horses, then wondering why cars keep getting stuck.
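A quick back-of-the-envelope calculation shows how a per-second cap becomes a floor on batch time. The 100 requests per second figure is the one cited above; the batch sizes are hypothetical, and the sketch assumes one request per transaction with no retries.

```python
def min_batch_seconds(n_requests: int, limit_per_second: int) -> float:
    """Lower bound on wall-clock time for a batch under a fixed rate limit."""
    if limit_per_second <= 0:
        raise ValueError("limit_per_second must be positive")
    return n_requests / limit_per_second


# Hypothetical agent batch sizes against the cited 100 requests/second cap.
for batch in (1_000, 10_000, 1_000_000):
    seconds = min_batch_seconds(batch, limit_per_second=100)
    print(f"{batch:>9,} requests -> at least {seconds:,.0f} s ({seconds / 60:,.1f} min)")
```

No matter how fast the agent reasons, a 10,000-transaction batch cannot finish in under 100 seconds at that limit.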

The Three-Layer Rebuild

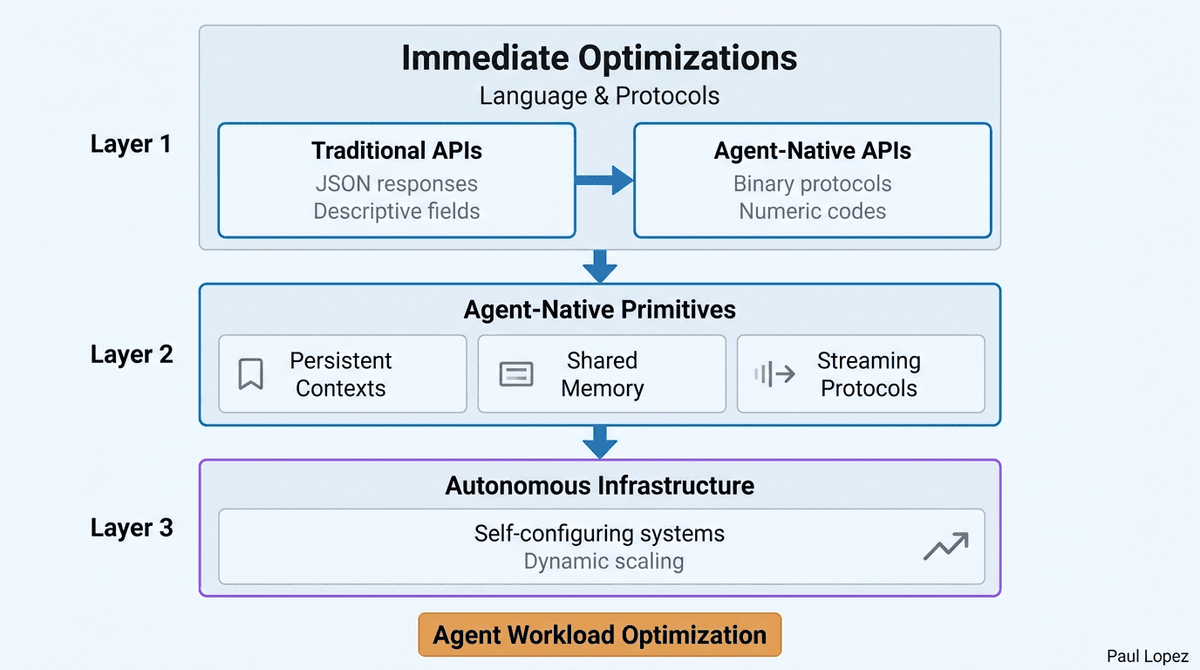

The solution isn't tweaking existing systems. It's recognizing that we need agent-native infrastructure built from the ground up.

Layer 1: Immediate Optimizations

Start with language and protocols. Traditional APIs use human-readable JSON responses with descriptive field names and error messages. Agent-native APIs could use compressed binary protocols with numeric codes. The difference matters: Anthropic's Computer Use API eliminates traditional web scraping bottlenecks by operating at the pixel level rather than parsing HTML meant for human browsers [7].
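To make the JSON-versus-binary point concrete, here is a small sketch using only Python's standard library. The record, its field names, the status codes, and the wire layout are all hypothetical; real agent-native protocols would likely use an established schema format rather than hand-rolled packing.

```python
import json
import struct

# Hypothetical status record; field names and codes are illustrative only.
record = {"transaction_id": 184467, "status": "insufficient_funds", "amount_cents": 125000}

STATUS_CODES = {"ok": 0, "insufficient_funds": 1, "rate_limited": 2}
STATUS_NAMES = {code: name for name, code in STATUS_CODES.items()}

# Network byte order, no padding: uint32 id, uint8 status code, uint64 amount.
WIRE_FORMAT = struct.Struct("!IBQ")


def encode_json(rec: dict) -> bytes:
    """Human-readable encoding with descriptive field names."""
    return json.dumps(rec).encode("utf-8")


def encode_binary(rec: dict) -> bytes:
    """Fixed-width encoding with a numeric status code."""
    return WIRE_FORMAT.pack(rec["transaction_id"], STATUS_CODES[rec["status"]], rec["amount_cents"])


def decode_binary(payload: bytes) -> dict:
    """Inverse of encode_binary."""
    transaction_id, code, amount_cents = WIRE_FORMAT.unpack(payload)
    return {"transaction_id": transaction_id, "status": STATUS_NAMES[code], "amount_cents": amount_cents}


print(len(encode_json(record)), "bytes as JSON")
print(len(encode_binary(record)), "bytes as packed binary")
```

The binary form is 13 bytes regardless of the values, and it round-trips back to the same record. The trade-off is that a human can no longer read it off the wire without the schema.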

OpenAI's Realtime API provides the template: reduce voice interaction latency from 2-5 seconds to 232ms by eliminating audio transcription steps entirely [8]. The AI doesn't need to convert speech to text and back to speech. It can think in audio.

Layer 2: Agent-Native Primitives

This means persistent contexts, shared memory systems, and streaming protocols. Current systems treat each AI interaction as a fresh conversation. Agent-native systems would maintain continuous context across all interactions. Cursor, for example, reports 3x faster development cycles using persistent codebase context versus traditional IDEs [9].

Think shared state rather than stateless interactions. Instead of uploading the same context repeatedly, agents would reference persistent knowledge graphs that update incrementally. The efficiency gains compound: faster individual operations, better cross-system coordination, reduced redundant processing.
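Here is a minimal sketch of what "reference persistent state, update incrementally" could look like. It is an in-memory stand-in, not a real knowledge graph, and the class and method names are hypothetical. The idea it illustrates is that an agent remembers the last version it saw and asks only for what changed since then.

```python
from dataclasses import dataclass, field


@dataclass
class ContextStore:
    """In-memory stand-in for a persistent, versioned agent context (hypothetical)."""
    version: int = 0
    facts: dict[str, str] = field(default_factory=dict)
    _log: list[tuple[int, str, str]] = field(default_factory=list)

    def update(self, key: str, value: str) -> int:
        """Record one change and return the new version number."""
        self.version += 1
        self.facts[key] = value
        self._log.append((self.version, key, value))
        return self.version

    def changes_since(self, version: int) -> dict[str, str]:
        """Return only keys written after `version`; later writes win."""
        return {key: value for v, key, value in self._log if v > version}


store = ContextStore()
store.update("repo", "payments-service")
seen = store.update("language", "Go")   # the agent has synced up to here
store.update("language", "Rust")        # something changes afterwards

delta = store.changes_since(seen)
print(delta)  # {'language': 'Rust'}
```

The agent receives one changed key instead of re-uploading the whole context, which is where the compounding savings would come from.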

Layer 3: Autonomous Infrastructure

This layer consists of self-configuring systems that scale resources dynamically based on agent workloads. Current infrastructure requires humans to predict capacity needs, provision servers, and adjust configurations. Agent-native infrastructure would monitor its own performance and optimize automatically.
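As a rough sketch of "monitor and adjust automatically", the function below uses a simple proportional rule: scale the replica count by the ratio of observed load to target load, then clamp it. This is the same general shape as the rule documented for the Kubernetes Horizontal Pod Autoscaler, but the metric (queue depth per replica), the bounds, and the function name are my assumptions.

```python
import math


def desired_replicas(current_replicas: int,
                     observed_per_replica: float,
                     target_per_replica: float,
                     min_replicas: int = 1,
                     max_replicas: int = 50) -> int:
    """Proportional scaling: grow or shrink by the observed/target load ratio."""
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    raw = math.ceil(current_replicas * observed_per_replica / target_per_replica)
    # Clamp so a metrics spike cannot scale to zero or to an unbounded fleet.
    return max(min_replicas, min(max_replicas, raw))


# Hypothetical agent workload: 4 workers, each with 30 queued tasks, target of 10.
print(desired_replicas(4, observed_per_replica=30, target_per_replica=10))  # 12
```

A burst of agent traffic triples the fleet without anyone filing a capacity ticket, and the clamp keeps a bad metric from doing something expensive.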

The economic case is compelling: 3x cheaper than traditional approaches while maintaining better performance [7]. But the transition costs are real. Gartner estimates that replacing legacy enterprise systems costs $50M-$500M for Fortune 500 companies [13]. Most organizations lack the technical talent for simultaneous system rebuilds [15].

The Human Evolution Question

Here's where the analysis gets nuanced. MIT's study of human-AI teams shows 85% better outcomes when humans provide strategic oversight versus fully autonomous operation [16]. Stanford HAI research demonstrates that human judgment prevents costly errors in 23% of agent decisions [17].

But there's a crucial distinction between human oversight and human bottlenecks. Strategic decision-making, relationship management, and ethical guardrails add value. Clicking approval buttons and manually transferring data between systems just slow things down.

Financial services regulations explicitly require human oversight and audit trails [10]. GDPR and emerging AI regulations may require human-interpretable interfaces for compliance [12]. The goal isn't eliminating humans. It's promoting them from data entry clerks to strategic orchestrators.
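One way to keep oversight without the bottleneck is to route decisions by risk and log everything. The sketch below is an assumption-heavy illustration: the thresholds, field names, and the rule that regulated actions always go to a person are hypothetical, not drawn from any specific regulation.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Decision:
    action: str
    amount_usd: float
    model_confidence: float
    regulated: bool


audit_log: list[dict] = []  # every decision is recorded, approved or not


def route(decision: Decision,
          max_auto_amount: float = 1_000.0,
          min_confidence: float = 0.95) -> Route:
    """Send only risky or regulated decisions to a human (hypothetical thresholds)."""
    if decision.regulated:
        return Route.HUMAN_REVIEW
    if decision.amount_usd > max_auto_amount or decision.model_confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE


def decide(decision: Decision) -> Route:
    """Route a decision and append an audit record for it."""
    outcome = route(decision)
    audit_log.append({"action": decision.action, "route": outcome.value})
    return outcome


small = Decision("refund", 40.0, 0.99, regulated=False)
large = Decision("wire_transfer", 250_000.0, 0.99, regulated=True)
print(decide(small).value)  # auto_approve
print(decide(large).value)  # human_review
```

The human sees the wire transfer, not the forty-dollar refund, and the audit trail covers both.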

Current LLMs still hallucinate 15-25% of factual claims, requiring verification loops [19]. Multi-agent coordination research shows coordination overhead scales quadratically with agent count [20]. The infrastructure needs to accommodate human oversight without creating human bottlenecks.
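A simple way to see why coordination overhead can grow quadratically is to count pairwise communication channels. This is my own simplified model, assuming every agent may need to talk to every other agent; the cited research may measure overhead differently.

```python
def pairwise_channels(agents: int) -> int:
    """Number of distinct agent pairs: n * (n - 1) / 2."""
    if agents < 0:
        raise ValueError("agents must be non-negative")
    return agents * (agents - 1) // 2


for n in (2, 5, 10, 50):
    print(f"{n:>2} agents -> {pairwise_channels(n):>4} possible channels")
```

Going from 10 agents to 50 multiplies the agent count by 5 but the possible channels by roughly 27 (45 to 1,225).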

The Pragmatic Path Forward

The transition will be evolutionary, not revolutionary. IPv6 adoption is still only 37% after 25 years [14]. Complete infrastructure overhauls take decades. But competitive advantages emerge much faster.

Start with internal tools where regulatory constraints are lighter and error costs are lower. Move to customer-facing systems gradually. The companies rebuilding now will have 2-3 years before competitors catch up. That's enough time to establish significant moats in operational efficiency and customer experience.

Domain-specific approaches work better than universal solutions. Healthcare systems will maintain human oversight longer due to regulatory requirements, while financial trading systems might automate more aggressively. Software development and content creation represent early opportunities with lower regulatory barriers.

The infrastructure changes will be as significant as mobile or cloud transitions. Mobile didn't just shrink desktop applications. It created entirely new interaction patterns. Cloud didn't just move servers offsite. It enabled completely different architectural approaches. Agent-native infrastructure won't just speed up existing workflows. It will enable workflows that are currently impossible.

Watch for the early signals: API redesigns that prioritize machine readability, authentication systems that work for persistent agents, and development tools that assume AI-first workflows. The companies making these investments now are building the platforms that will define the next decade of competitive advantage.

The speed tax is optional. The question is whether you'll pay it or profit from eliminating it.

References

[1] OpenAI. (2024). "GPT-4 Turbo Performance Benchmarks." OpenAI Technical Report.

[2] Anthropic. (2024). "Claude's Document Analysis Capabilities." Anthropic Research Papers.

[3] Google DeepMind. (2024). "Gemini Ultra: Code Review and Debugging Performance." Nature Machine Intelligence.

[4] Stripe. (2024). "API Rate Limits Documentation." Stripe Developer Docs.

[5] Moritz, P. et al. (2024). "Latency Analysis in Machine Learning Pipelines." Berkeley RISE Lab.

[6] Microsoft Research. (2024). "GitHub Copilot Performance Analysis." MSR Technical Report.

[7] Anthropic. (2024). "Computer Use API: Direct System Interaction." Anthropic Blog.

[8] OpenAI. (2024). "Realtime API: Low-Latency Voice Interactions." OpenAI Documentation.

[9] Cursor Team. (2024). "Development Velocity Metrics." Cursor Technical Blog.

[10] Financial Industry Regulatory Authority. (2024). "AI Governance Requirements."

[13] Gartner. (2024). "Enterprise System Modernization Costs." Gartner Research.

[14] Google IPv6 Statistics. (2024). "Global IPv6 Adoption Rates."

[15] McKinsey & Company. (2024). "The State of AI in Enterprise Infrastructure." McKinsey Global Institute.

[16] MIT Computer Science and Artificial Intelligence Laboratory. (2024). "Human-AI Collaboration Outcomes."

[17] Stanford Human-Centered AI Institute. (2024). "Human Oversight in Autonomous Systems."

[19] Anthropic. (2024). "Constitutional AI: Reducing Hallucinations." Anthropic Safety Research.

[20] Berkeley AI Research. (2024). "Multi-Agent Coordination Overhead." BAIR Technical Report.